P7.004: Tech Trends 2018 - Blockchain, API

Part 4 of the Tech Trends 2018 series covers:

6. Blockchain

7. API: Application Programming Interfaces

See the Tech Trends 2018 report

Summary:

6. From blockchain to many blockchains: Broad adoption and integration into as many fields as possible

Companies should look to standardize the technology, talent, and platforms that will drive future initiatives—and, after that, look to coordinate and integrate multiple blockchains working together across a value chain.

Public and private sector organizations might use blockchain to share information selectively and securely with others, exchange assets, and proffer digital contracts. Individuals could use blockchain to manage their financial, medical, and legal records, a scenario in which blockchain might eventually replace banks, credit agencies, and other traditional intermediaries as the gatekeeper of trust and reputation.

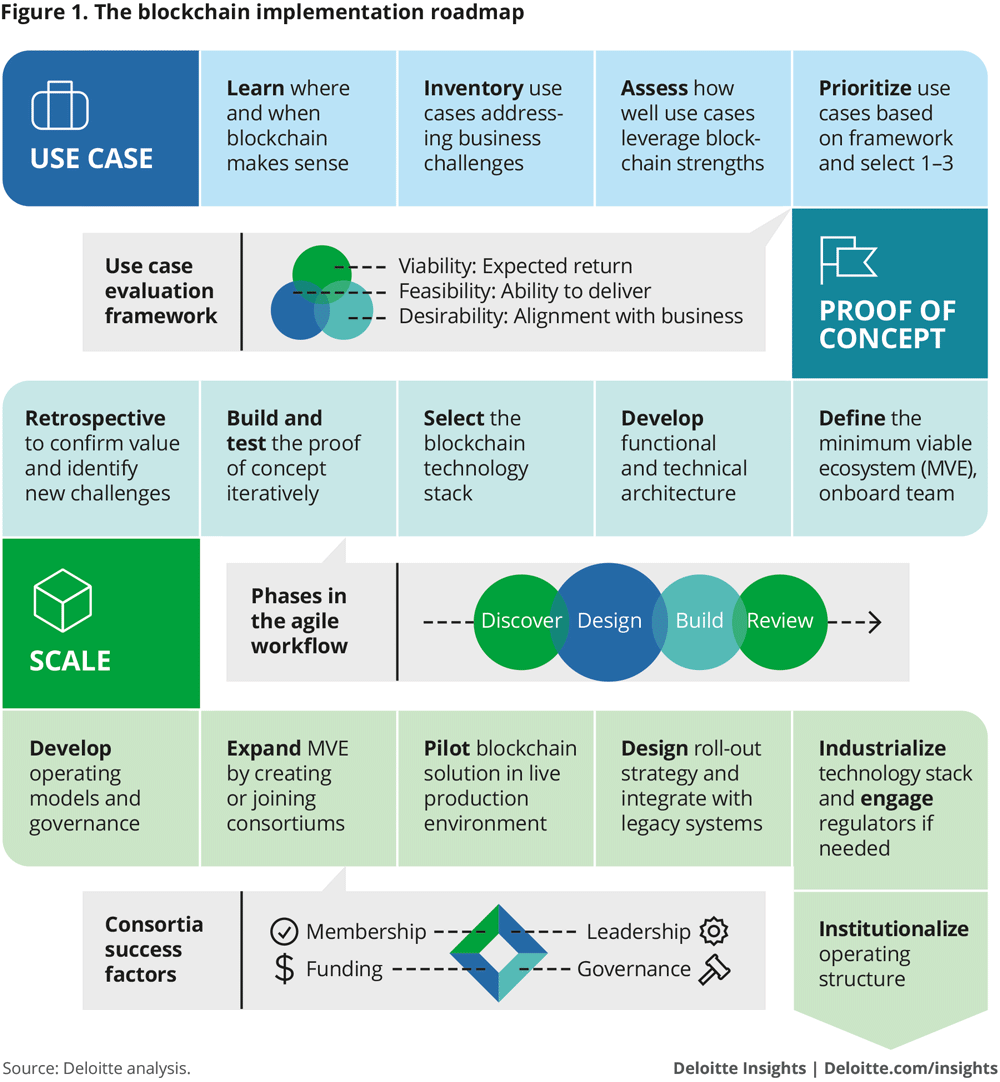

Blockchain is now finding applications in every region and sector. For example:

- Europe’s largest shipping port, Rotterdam, has launched a research lab to explore the technology’s applications in logistics.3

- Utilities in North America and Europe are using blockchain to trade energy futures and manage billing at electric vehicle charging stations.4

- Blockchain is disrupting social media by giving users an opportunity to own and control their images and content.5

- Blockchain consortiums—including the Enterprise Ethereum Alliance, Hyperledger Project, R3, and B3i—are developing an array of enterprise blockchain solutions.

This list is growing steadily as adopters take use cases and PoCs closer to production and industry segments experiment with different approaches for increasing blockchain’s scalability and scope.

In the latest blockchain trend that will unfold over the next 18 to 24 months, expect to see more organizations push beyond these obstacles and turn initial use cases and PoCs into fully deployed production solutions.

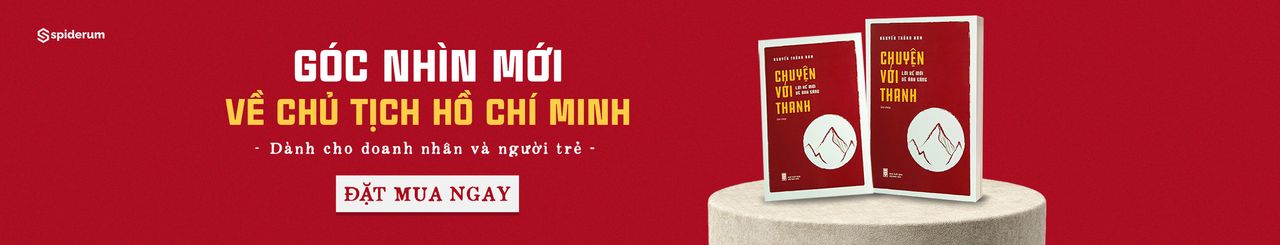

Three approaches together make up the latest blockchain trend:

- Focus blockchain development resources on use cases with a clear path to commercialization

- Push for standardization in technology, business processes, and talent skillsets

- Work to integrate and coordinate multiple blockchains within a value chain

Because we are only now coming to the end of a hot blockchain hype cycle, many people assume that enterprise blockchain adoption is further along than it actually is. In reality, it will take time and dedication to get to large-scale adoption. But when it does arrive, it will be anchored in the strategies, unique skillsets, and pioneering use cases currently emerging in areas such as trade, finance, cross-border payments, and reinsurance.

Treading the path to commercialization

By answering the following questions, CIOs can assess the commercial potential of their blockchain use cases:

- How does this use case enable our organization’s strategic objectives over the next five years?

- What does my implementation roadmap look like? Moreover, how can I design that roadmap to take use cases into full production and maximize their ROI?

- What specialized skillsets will I need to drive this commercialization strategy? Where can I find talent who can bring technical insight and commercialization experience to initiatives?

- Is IT prepared to work across the enterprise (and externally with consortium partners) to build PoCs that deliver business value?

One final point to keep in mind: Blockchain use cases do not necessarily need to be industry-specific or broadly scoped to have commercial potential. In the coming months, as the trend toward mass adoption progresses, expect to see more use cases emerge that focus on enterprise-specific applications that meet unique value chain issues across organizations. If these use cases offer potential revenue opportunities down the road—think licensing, for example—all the better.

Next stop, standardization

Consider standardization’s potential benefits—none of which companies developing blockchain capabilities currently enjoy:

- Enterprises would be able to share blockchain solutions more easily, and collaborate on their ongoing development.

- Standardized technologies can evolve over time. The inefficiency of rip-and-replace with every iteration could become a thing of the past.

- Enterprises would be able to use accepted standards to validate their PoCs. Likewise, they could extend those standards across the organization as production blockchains scale.

- IT talent could develop deep knowledge in one or two prominent blockchain protocols rather than developing basic knowhow in multiple protocols or platforms.

For CIOs, this presents a pressing question: Do you want to wait for standards to be defined by your competitors, or should you and your team work to define the standards yourselves?

Consider financial services giant JP Morgan Chase. In 2017, the firm launched Quorum, an open-source, enterprise-ready distributed ledger and smart contracts platform created specifically to meet the needs of the financial services industry. Quorum’s unique design remains a work in progress: JP Morgan Chase invited technologists from around the world to collaborate to “advance the state of the art for distributed ledger technology.”

Internally, CIOs can empower their teams to make decisions that drive standards within company ecosystems. Finally, in many organizations, data management and process standards already exist. Don’t look to reinvent the wheel. Apply these same standards to your blockchain solution.

Integrating multiple blockchains in a value chain

In the future, blockchain solutions from different companies or even industries will be able to communicate and share digital assets with each other seamlessly.

Unfortunately, many of the technical challenges preventing blockchain integration persist. Different protocols—for example, Hyperledger Fabric and Ethereum—cannot integrate easily. Think of them as completely different enterprise systems. To share information between these two systems, you would need to create an integration layer (laborious and painful) or standardize on a single protocol.
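To make the "integration layer" idea concrete, here is a minimal Python sketch. It assumes two ledgers that describe the same asset transfer with different field names, and it translates through a neutral record. The record shapes, class names, and field names are all invented for illustration; they are not the Hyperledger Fabric or Ethereum APIs.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class AssetTransfer:
    """Neutral record shape shared by both sides (hypothetical)."""
    asset_id: str
    sender: str
    receiver: str
    amount: int


class LedgerAdapter(Protocol):
    """What the integration layer needs from each ledger-specific adapter."""
    def to_native(self, transfer: AssetTransfer) -> dict: ...
    def from_native(self, record: dict) -> AssetTransfer: ...


class LedgerAAdapter:
    """Maps the neutral record to ledger A's invented field names."""

    def to_native(self, transfer: AssetTransfer) -> dict:
        return {"id": transfer.asset_id, "from": transfer.sender,
                "to": transfer.receiver, "qty": transfer.amount}

    def from_native(self, record: dict) -> AssetTransfer:
        return AssetTransfer(record["id"], record["from"],
                             record["to"], record["qty"])


class LedgerBAdapter:
    """Maps the neutral record to ledger B's invented field names."""

    def to_native(self, transfer: AssetTransfer) -> dict:
        return {"token": transfer.asset_id,
                "parties": [transfer.sender, transfer.receiver],
                "value": str(transfer.amount)}

    def from_native(self, record: dict) -> AssetTransfer:
        sender, receiver = record["parties"]
        return AssetTransfer(record["token"], sender, receiver,
                             int(record["value"]))


def relay(record: dict, source: LedgerAdapter, target: LedgerAdapter) -> dict:
    """Translate a native record from the source format to the target format."""
    return target.to_native(source.from_native(record))
```

Each additional protocol needs only one new adapter against the neutral record rather than a translator for every pair of ledgers. A real integration would also have to reconcile consensus, identity, and finality, which this sketch ignores.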

The Hyperledger Foundation and others are working to establish technical standards that define what constitutes a blockchain, and to develop the protocols required to exchange assets.

Skeptic’s corner

Misconception: Standards must be in place before my organization can adopt a production solution.

Reality check: Currently, there are no overarching technical standards for blockchain, and it is unrealistic to think we will get them soon, if ever, across all use cases.

These use case-based standards are established, if not commonly accepted, which means you may not have to wait for universal standards to emerge before adopting a blockchain production solution.

Misconception: Quantum computing may completely invalidate blockchain.

Reality check: That is a possibility, but it may never happen.

Blockchain technologies will continue to evolve in ways that accommodate quantum’s eventual impact, for better or worse, on encryption.

Misconception: Blockchain is free.

Reality check: Not quite. While most blockchain code is open-source and runs on low-cost hardware and public clouds, the full integration of blockchains into existing environments will require both resources and expertise, which don’t come cheap. Blockchain technologies, like the systems and tools that users need to interact with them, require IT maintenance and support. Blockchain platforms will likely run in parallel with current platforms, which may add short-term costs.

Lessons from the front lines

LINKING THE CHAINS

In October 2016, global insurance and asset management firm Allianz teamed up with several other insurance and reinsurance organizations to explore opportunities for using blockchain to provide client services more efficiently, streamline reconciliations, and increase the auditability of transactions.

The joint effort, the Blockchain Insurance Industry Initiative (B3i), welcomed 23 new members from across the insurance sector and began market-testing a new blockchain reinsurance prototype.

A broader opportunity looms large above Allianz’s blockchain initiatives as well as those underway in other industries: integrating and orchestrating multiple blockchains across a single value chain.

The B3i use case is laying the groundwork for future collaboration and even standardization across the insurance sector.

BLOCKCHAIN BEYOND BORDERS: THE HONG KONG MONETARY AUTHORITY

The Hong Kong Monetary Authority (HKMA) is the central banking authority responsible for maintaining the monetary and banking stability and international financial center status of Hong Kong.

HKMA is exploring the potential of blockchain, or distributed ledger technology (DLT), for a variety of financial applications and transactions.

The proof of concept focused on trade finance for banks, buyers and sellers, and logistics companies. It leveraged DLT to create a platform for automating labor-intensive processes via smart contracts, reducing the risk of fraudulent trade and duplicate financing, and improving the transparency and productivity of the industry as a whole. DLT provided immutable data integrity, enhanced reliability with built-in disaster recovery mechanisms, enabled near-real-time updates of data across the nodes, and acted as a repository for transactional data.
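The "immutable data integrity" property can be illustrated with a toy hash chain. This Python sketch is not the HKMA platform and leaves out consensus, networking, and smart contracts. It only shows how linking each record to the hash of the previous one makes later tampering detectable. The record fields and function names are made up for the example.

```python
import hashlib
import json


def block_hash(index, data, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash},
                         sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    """Append a record whose hash depends on everything before it."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    chain.append({"index": index, "data": data, "prev": prev_hash,
                  "hash": block_hash(index, data, prev_hash)})
    return chain


def is_valid(chain):
    """Recompute every hash and link; any edited record breaks the check."""
    prev_hash = "0" * 64
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev"] != prev_hash:
            return False
        if block["hash"] != block_hash(block["index"], block["data"],
                                       block["prev"]):
            return False
        prev_hash = block["hash"]
    return True
```

Changing the amount on an already recorded invoice makes `is_valid` return `False`, which is the kind of check that could help surface duplicate or altered financing records. In a distributed ledger, the same verification runs independently on every participating node.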

With seven banks now participating in the trade finance blockchain, HKMA intends to launch a production pilot in the second half of 2018. It plans to have a full commercialized solution in production by 2019. Also, there are a number of other banks waiting in the queue to participate in this platform.

HKMA is exploring interconnectivity between blockchains with Singapore’s government and Monetary Authority of Singapore (MAS), which could be the foundation of an international blockchain ecosystem.

My take

PETER MILLER, PRESIDENT AND CEO

THE INSTITUTES

Over the last 108 years, The Institutes has supported the evolving professional development needs of the risk management and insurance community with educational, research, networking, and career resource solutions.

For our industry, blockchain has the capacity to streamline payments, premiums, and claims; reduce fraud through a centralized record of claims; and improve acquisition of new policyholders by validating the accuracy of customer data.

We’ve formed The Institutes RiskBlock Alliance, the first nonprofit, enterprise-level blockchain consortium. It will bring together risk management and insurance industry experts and blockchain developers to research, develop, and test blockchain applications for industry-specific use cases.

the need to communicate to multiple blockchains and enable federated inter-blockchain communication to facilitate reuse of capabilities among 30 organizations from various industry segments.

To start, we are tackling four use cases that technology has struggled to tame: proof of insurance, first notice of loss, subrogation, and parametric insurance.

To facilitate adoption, organizations need to advance along the learning curve and focus on the business problems that blockchain could solve.

Risk implications

Blockchain risks fall into three categories: common risks, value transfer risks, and smart contract risks.

COMMON RISKS

Blockchain technology exposes institutions to similar risks associated with current business processes—such as strategic, regulatory, and supplier risks—but introduces nuances for which entities need to account.

VALUE TRANSFER RISKS

Because blockchain enables peer-to-peer transfer of value, the interacting parties should protect themselves against risks previously managed by central intermediaries.

SMART CONTRACT RISKS

Smart contracts can encode complex business, financial, and legal arrangements on the blockchain, so there is risk associated with the one-to-one mapping of these arrangements from the physical to the digital framework.

The Financial Industry Regulatory Authority has shared operational and regulatory considerations for developing use cases within capital markets. Organizations should work to address these regulatory requirements in their blockchain-based business models and establish a robust risk-management strategy, governance, and controls framework.

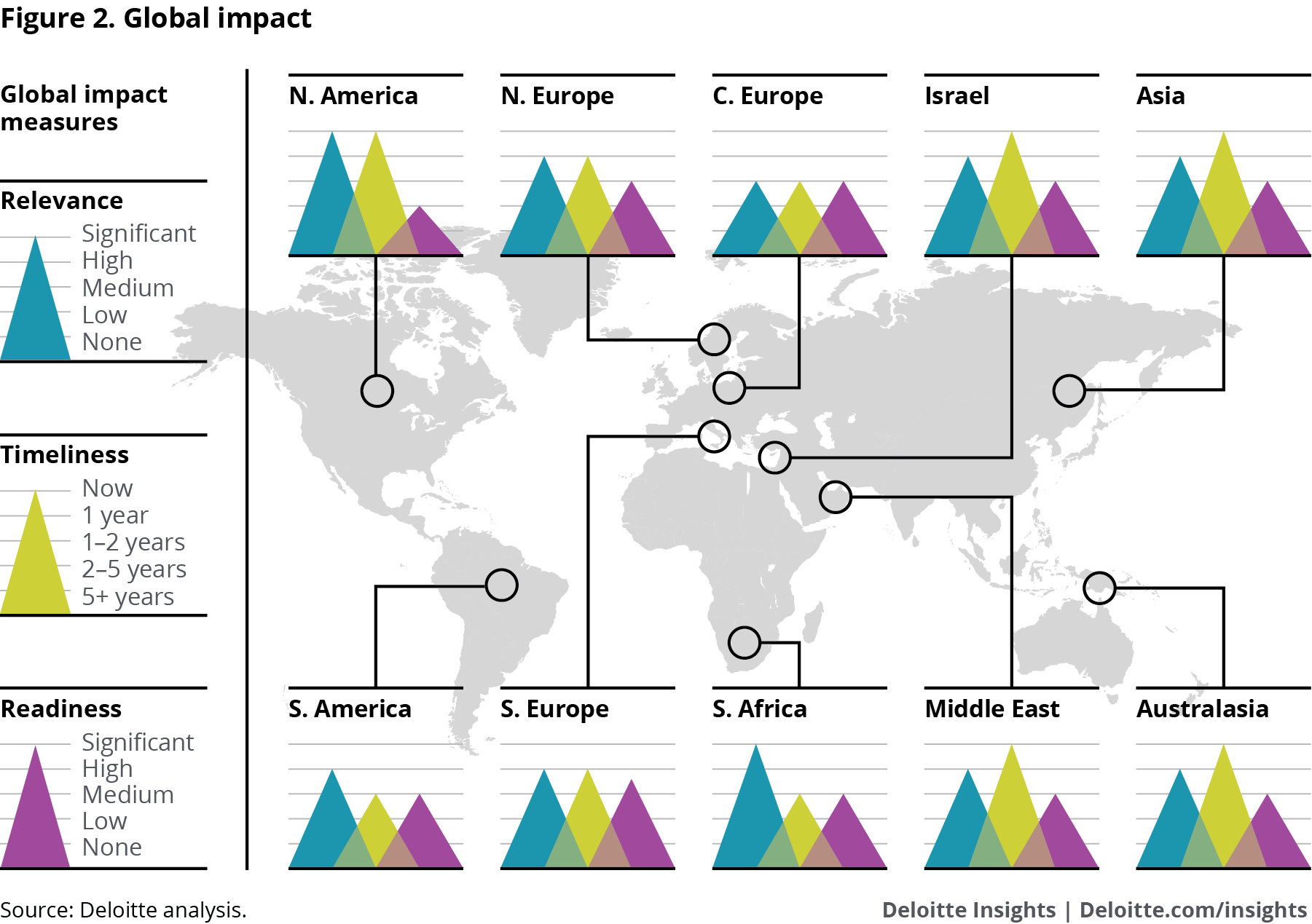

Global impact

Where do you start?

Though some pioneering organizations may be preparing to take their blockchain use cases and PoCs into production, no doubt many are less far down the adoption path. To begin exploring blockchain’s commercialization potential in your organization, consider taking the following foundational steps:

- Determine if your company actually needs what blockchain offers. There is a common misconception in the marketplace that blockchain can solve any number of organizational challenges. In reality, it can be a powerful tool for only certain use cases. As you chart a path toward commercialization, it’s important to understand the extent to which blockchain can support your strategic goals and drive real value.

- Put your money on a winning horse. Examine the blockchain use cases you currently have in development. Chances are there are one or two designed to satisfy your curiosity and sense of adventure. Deep-six those. On the path to blockchain commercialization, focusing on use cases that have disruptive potential or those aligned tightly with strategic objectives can help build support among stakeholders and partners and demonstrate real commercialization potential.

- Identify your minimum viable ecosystem. Who are the market players and business partners you need to make your commercialization strategy work? Some will be essential to the product development life cycle; others will play critical roles in the transition from experimentation to commercialization. Together, these individuals comprise your minimum viable ecosystem.

- Become a stickler for consortium rules. Blockchain ecosystems typically involve multiple parties in an industry working together in a consortium to support and leverage a blockchain platform. To work effectively, consortia need all participants to have clearly defined roles and responsibilities. Without detailed operating and governance models that address liability, participant responsibilities, and the process for joining and leaving the consortium, it can become more difficult—if not impossible—to make subsequent group decisions about technology, strategy, and ongoing operations.

- Start thinking about talent—now. To maximize returns on blockchain investments, organizations will likely need qualified, experienced IT talent who can manage blockchain functionality, implement updates, and support participants. Yet as interest in blockchain grows, organizations looking to implement blockchain solutions may find it increasingly challenging to recruit qualified IT professionals. In this tight labor market, some CIOs are relying on technology partners and third-party vendors that have a working knowledge of their clients’ internal ecosystems to manage blockchain platforms. While external support may help meet immediate talent needs and contribute to long-term blockchain success, internal blockchain talent—individuals who accrue valuable system knowledge over time and remain with an organization after external talent has moved on to the next project—can be critical for maintaining continuity and sustainability. CIOs should consider training and developing internal talent while, at the same time, leveraging external talent on an as-needed basis.

BOTTOM LINE

With the initial hype surrounding blockchain beginning to wane, more companies are developing solid use cases and exploring opportunities for blockchain commercialization. Indeed, a few early adopters are even pushing PoCs into full production. Though a lack of standardization in technology and skills may present short-term challenges, expect broader adoption of blockchain to advance steadily in the coming years as companies push beyond these obstacles and work toward integrating and coordinating multiple blockchains within a single value chain.

Authors

Eric Piscini is a principal with Deloitte Consulting LLP and is based in Atlanta.

Darshini Dalal is a technology strategist with Deloitte Consulting LLP’s Technology, Strategy and Transformation practice, based in Boston.

David Mapgaonkar is a principal with Deloitte and Touche LLP’s cyber risk services and is based in San Jose, Calif.

Prakash Santhana is a managing director with Deloitte Transactions and Business Analytics LLP, based in New York.

7. The API imperative: From IT concern to business mandate

technology assets as, well, assets?

The “API imperative” involves strategically deploying services and platforms for use both within and beyond the enterprise.

Eli Whitney’s interchangeable rifle parts gave way to Henry Ford’s assembly lines, which ushered in the era of mass production. Sabre transformed the airline industry by standardizing booking and ticketing processes—which in turn drove unprecedented collaboration. Payment networks simplified global banking, with SWIFT and FIX becoming the backbone of financial exchanges, which in turn made dramatic growth in trade and commerce possible.

The same concept manifests in the digital era as “platforms”—solutions whose value lies not only in their ability to solve immediate business problems but in their effectiveness as launching pads for future growth. Look no further than the core offerings of global digital giants, including Alibaba, Alphabet, Apple Inc., Amazon, Facebook, Microsoft, Tencent, and Baidu.

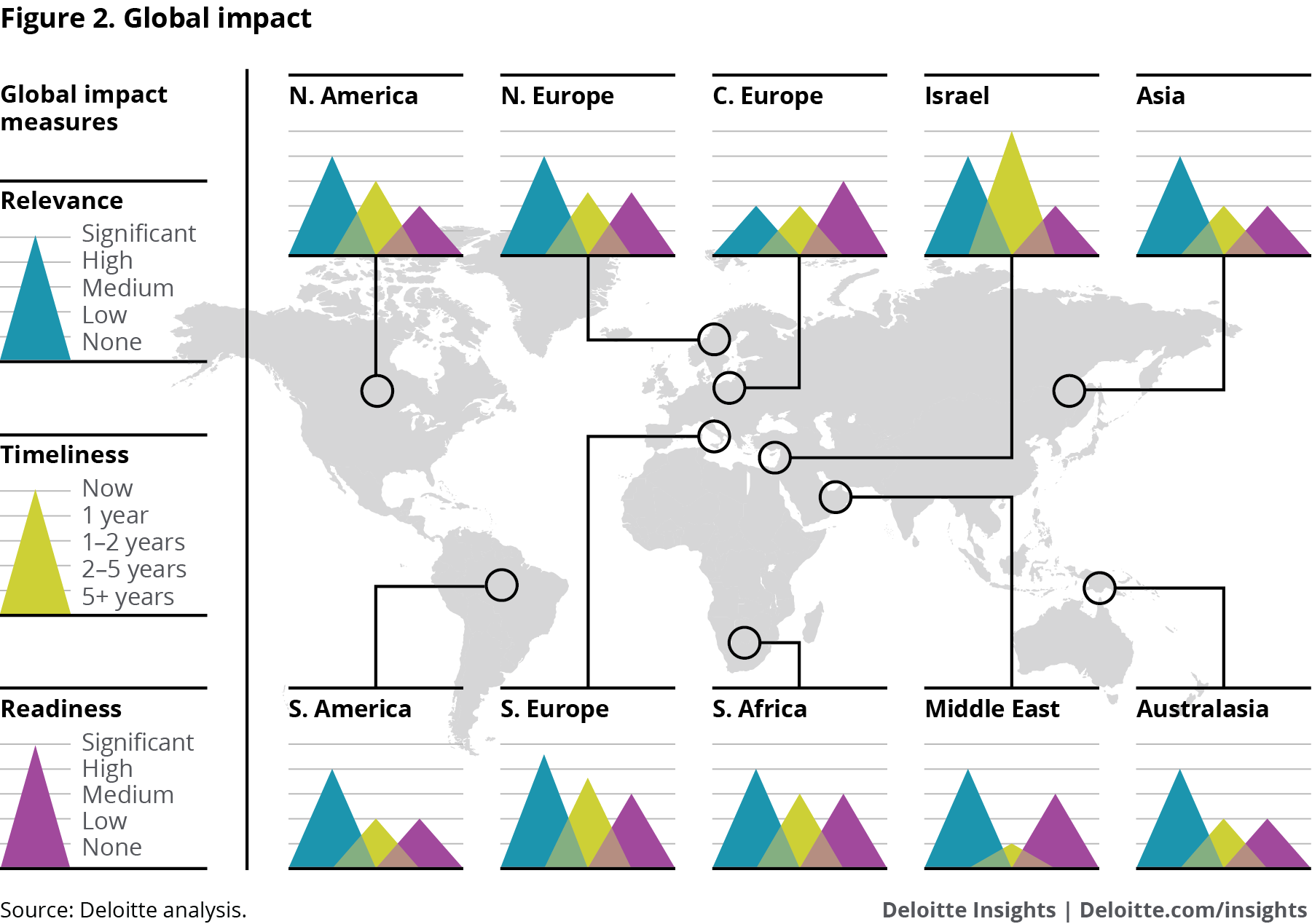

In the world of information technology, application programming interfaces (APIs) are one of the key building blocks supporting interoperability and design modularity.

“API economy.”1 This growth continues apace: As of early 2017, the number of public APIs available surpassed 18,000, representing an increase of roughly 2,000 new APIs over the previous year.2 Across large enterprises globally, private APIs likely number in the millions.

A fresh look

Good API design also introduced controls to help manage an API’s life cycle, including:

- Versioning. The ability to change without rendering older versions of the same API inoperable.

- Standardization. A uniform way for APIs to be expressed and consumed, from COM and CORBA object brokers to web services to today’s RESTful patterns.

- API information control. A built-in means for enriching and handling the information embodied by the API. This information includes metadata, approaches to handling batches of records, and hooks for middleware platforms, message brokers, and service buses. It also defines how APIs communicate, route, and manipulate the information being exchanged.
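The versioning control in the list above can be sketched in a few lines of Python. This assumes a simple in-process registry keyed by API name and version; the `api` decorator, the `customer` API, and its fields are hypothetical. The point is only that publishing version 2 leaves version 1 callable.

```python
from typing import Callable, Dict, Tuple

Handler = Callable[[dict], dict]

# (api name, version) -> handler; a stand-in for a real API catalog
_registry: Dict[Tuple[str, int], Handler] = {}


def api(name: str, version: int):
    """Register a handler under an explicit version number."""
    def decorator(func: Handler) -> Handler:
        _registry[(name, version)] = func
        return func
    return decorator


@api("customer", 1)
def customer_v1(record: dict) -> dict:
    # Original contract: a single combined name field.
    return {"name": record["first"] + " " + record["last"]}


@api("customer", 2)
def customer_v2(record: dict) -> dict:
    # New contract: split name fields; v1 callers are unaffected.
    return {"first_name": record["first"], "last_name": record["last"]}


def call(name: str, version: int, record: dict) -> dict:
    """Dispatch to the requested version, failing clearly if it is unknown."""
    try:
        handler = _registry[(name, version)]
    except KeyError:
        raise LookupError(f"{name} v{version} is not published") from None
    return handler(record)
```

Consumers pin the version they were built against, so the provider can change the response shape in a new version and retire old versions on its own schedule.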

Over the next 18 to 24 months, expect many heretofore cautious companies to embrace the API imperative—the strategic deployment of application programming interfaces to facilitate self-service publishing and consumption of services within and beyond the enterprise.

The why to the what

The ability, both strategically and culturally, to create and consume APIs is key to achieving business agility, unlocking new value in existing assets, and accelerating the process of delivering new ideas to the market.

Revitalizing a legacy system with modern APIs encapsulates intellectual property and data contained within that system, making this information reusable by new or younger developers who might not know how to use it directly (and probably would not want to).

APIs’ potential varies by industry and the deploying company’s underlying strategy. In a recent in-depth study of API use in the financial services sector, Deloitte, in collaboration with the Association of Banks and the Monetary Authority in Singapore, identified 5,636 system and business processes common to financial services firms, mapping them to a manageable collection of 411 APIs.

Support from the top

As companies evolve their thinking away from project- to API-focused development, they will likely need to design management programs to address new ways of:

- Aligning budgeting and sponsorship. Embed expectations for project and program prioritization to address API concerns, while building out shared API-management capabilities.

- Scoping to identify common reusable services. Understand which APIs are important and at what level of granularity they should be defined; determine appropriate functionality trade-offs of programmatic ambitions versus immediate project needs.

- Balancing comprehensive enterprise planning with market need. In the spirit of rapid progress, avoid the urge to exhaustively map potential APIs or existing interface and service landscapes. Directionally identifying high-value data and business processes, and then mapping that list broadly to business’s top initiative priorities, can help prevent “planning paralysis” and keep your API projects moving.

- Incenting reuse before “building new.” Measure and reward business and technology resources for taking advantage of existing APIs with internal and external assets. To this end, consider creating internal/external developer forums to encourage broader discovery and collaboration.

- Staffing new development initiatives to enable the API vision. While IT should lead the effort to create effective API management programs, it shouldn’t be that function’s sole responsibility. Nor should IT be expected to build and deliver every API integration. Consider, instead, transforming an existing shared-services center of excellence (COE) that involves the lines of business. Shifting from a COE mentality that emphasizes centralized control of all shared services to a federated center for enablement (C4E) approach—tying in stakeholders and development resources enterprise-wide—can help organizations improve API program scalability and management effectiveness.

Enterprise API management

As your ambitions evolve, explore how one or more of the following technology layers can help you manage APIs more strategically throughout their life cycle:

- API portal: a means for developers to discover, collaborate, consume, and publish APIs.

- API gateway: a mechanism that allows consumers to become authenticated and to “contract” with API specifications and policies that are built into the API itself.

- API brokers: enrichment, transformation, and validation services to manipulate information coming to/from APIs, as well as tools to embody business rule engines, workflow, and business process orchestration on top of underlying APIs.

- API management and monitoring: a centralized and managed control level that provides monitoring, service level management, SDLC process integration, and role-based access management across all three layers above.
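As a rough illustration of how the gateway and monitoring layers fit together, the Python sketch below authenticates a consumer by API key, checks which APIs that consumer has subscribed to, forwards the call, and counts usage. The `Gateway` class and its key and route tables are assumptions made for this example, not the interface of any particular API management product.

```python
from collections import Counter


class Gateway:
    """Toy API gateway: authenticate, authorize, route, and count calls."""

    def __init__(self, api_keys, routes):
        self._api_keys = api_keys  # consumer key -> set of subscribed API names
        self._routes = routes      # API name -> backend callable
        self.calls = Counter()     # (consumer key, API name) -> successful calls

    def handle(self, api_key, api_name, payload):
        allowed = self._api_keys.get(api_key)
        if allowed is None:
            return 401, {"error": "unknown consumer"}
        if api_name not in allowed:
            return 403, {"error": "not subscribed to this API"}
        backend = self._routes.get(api_name)
        if backend is None:
            return 404, {"error": "no such API"}
        # Record usage so a monitoring layer can report per-consumer traffic.
        self.calls[(api_key, api_name)] += 1
        return 200, backend(payload)
```

Keeping authentication and policy checks in one place means the backend services do not each have to reimplement them, and the `calls` counter gives the management layer a single source of usage data.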

Tomorrow and beyond

The API imperative trend is a strategic pillar of the reengineering technology trend discussed earlier in Tech Trends 2018. As with reengineering technology, the API imperative embodies a broader commitment not only to developing modern architecture but to enhancing technology’s potential ROI.

It offers a way to make broad digital ambitions actionable, introducing management systems and technical architecture to embody a commitment toward business agility, reuse of technology assets, and potentially new avenues for exposing and monetizing intellectual property.

Skeptic’s corner

Misconception: APIs have been around for a long time.

Reality: Developers are following Silicon Valley’s lead by reimagining core systems as microservices, building APIs using modern RESTful architectures, and taking advantage of robust, off-the-shelf API management platforms.

Increasingly, organizations are deploying a microservices approach for breaking down systems and rebuilding them as self-contained embodiments of business rules.

Microservices look to break larger applications into small, modular, independently deployable services. This approach turns the rhetoric of SOA into a modernized application architecture and can magnify APIs’ impacts.

REST stands for “representational state transfer.” APIs built according to REST architectural standards are stateless and offer a simpler alternative to some SOAP standards.
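Statelessness here means the server keeps no per-client session between calls: every request carries what is needed to process it. The Python sketch below models that with a plain function over a hypothetical `/accounts/{id}` resource. The path, the status codes chosen, and the in-memory `store` are illustrative assumptions, not a full HTTP server.

```python
def handle(method, path, body, store):
    """Handle one request using only its own contents plus the resource store."""
    parts = [p for p in path.split("/") if p]
    if len(parts) != 2 or parts[0] != "accounts":
        return 404, None
    account_id = parts[1]

    if method == "GET":
        if account_id not in store:
            return 404, None
        return 200, store[account_id]

    if method == "PUT":
        # PUT replaces the whole resource, so repeating it is harmless.
        created = account_id not in store
        store[account_id] = dict(body)
        return (201 if created else 200), store[account_id]

    if method == "DELETE":
        removed = store.pop(account_id, None) is not None
        return (204, None) if removed else (404, None)

    return 405, None
```

Because no call depends on a previous one, any server instance holding the same resource store can answer any request, which is part of what makes this style easy to scale and to place behind a gateway.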

API management platforms have evolved to complement the core messaging, middleware, and service bus offerings from yesteryear.

Misconception: Project-based execution is cheaper and faster. I don’t have time to design products.

Reality: By understanding cross-project requirements and designing for reuse, your costs, in both time and budget, become leveraged, and the value you create compounds over time.

Consider subsidizing those investments so that business owners and project sponsors don’t feel as though they are being taxed. Also, look for ways to reward teams for creating and consuming APIs.

Misconception: I don’t have the executive sponsorship I need to take on an API transformation. If I don’t sell it up high and secure a budget, it’s not going to work.

Reality: You don’t have to take on a full-blown API transformation project immediately.

Lessons from the front lines

AT&T’S LEAN, MEAN API MACHINE

The first step was application rationalization, which leaders positioned as an enterprise-wide business initiative. In the last decade, the IT team reduced the number of applications from 6,000-plus to 2,500, with a goal of 1,500 by the year 2020.

AT&T made the API platform the focus of its solutions architecture team, which fields more than 3,000 business project requests each year and lays out a blueprint of how to architect each solution within the platform.

In the beginning, they seeded the API platform by building APIs to serve specific business needs. Over time, the team shifted from building new APIs to reusing them.

The next step in AT&T’s transformation is a microservices journey. The team is taking monolithic applications with the highest spend, pain points, and total cost of ownership, and turning them and all the layers—UI/UX, business logic, workflow, and data, for example—into microservices.

The API and microservices platform will deliver a true DevOps experience (forming an automated continuous integration/continuous delivery pipeline) supporting velocity and scalability to enable speed, reduce cost, and improve quality.

The platform will support several of AT&T’s strategic initiatives: artificial intelligence, machine learning, cloud development, and automation, among others.

THE COCA-COLA CO.: APIS ARE THE REAL THING

Embracing digital is a goal set by the organization’s new CEO, James Quincey. The enterprise architecture team found itself well positioned for the resulting IT modernization push, having already laid the foundation with an aggressive API strategy.

The team leveraged Splunk software to monitor the APIs’ performance; this enabled them to shift from being reactive to proactive, as they could monitor performance levels and intervene before degradation or outages occurred.

The organization is undergoing a systemwide assessment to gauge its readiness in five areas: data, digital talent, automation innovation, cloud, and cyber.

The modernization program first targeted legacy systems for Foodservice, one of Coca-Cola’s oldest businesses.

STATE OF MICHIGAN OPTIMIZES RESOURCES THROUGH REUSE

The State of Michigan’s Department of Technology, Management and Budget (DTMB) provides administrative and technology services and information for departments and agencies in the state government’s executive branch.

When the Michigan Department of Health and Human Services (MDHHS) needed to exchange Medicaid-related information across agencies in support of legislative changes mandated by the Affordable Care Act, DTMB implemented an enterprise service bus and established a reusable integration foundation.

An API layer would allow for reuse and scalability, as well as provide operational stability through service management, helping to prevent outages and performance degradation across the system by monitoring and limiting service consumers.
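One common way to "limit service consumers" is a token bucket per consumer. The source does not say which mechanism Michigan's API layer uses, so the Python sketch below is a generic illustration; the class name, parameters, and injectable clock are assumptions made for the example.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, then a steady `refill_per_second`."""

    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock              # injectable so tests can control time
        self._tokens = float(capacity)   # start with a full bucket
        self._last = clock()

    def allow(self):
        """Return True and spend a token if the consumer may call now."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill in proportion to elapsed time, never above capacity.
        self._tokens = min(self.capacity,
                           self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

An API layer would typically keep one bucket per consumer key and reject or queue a call when `allow()` returns `False`, so a single heavy consumer cannot degrade the shared service for everyone else.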

The first step was to expand the enterprise service bus to enable the cloud-based portal to leverage existing state assets.

Development time has decreased by leveraging existing enterprise shared services such as a master person index and address cleansing.

Reaction to the pilot has been positive, and the faster time to market, improved operational stability, and data quality are already yielding benefits to the consumers.

CIBC: BUILDING THE BANK OF THE FUTURE

The Canadian Imperial Bank of Commerce (CIBC), a 150-year-old institution, is building new capabilities to help it meet customers’ increasingly sophisticated needs.

Building a platform for integration is not new to CIBC, which has thousands of highly reusable web services running across its platform. But the team recognized that the current SOA-based model is being replaced by a next-gen architecture: one based on RESTful APIs combined with a microservices architecture.

“We envision an API/microservices-based approach as the heart of the Global Open Banking movement,” Fedosoff says. “Financial services firms will look to open up capabilities, and as a result, will need to develop innovative features for clients and effortless journeys for clients. APIs may be a smart way to do it.”

My take

WERNER VOGELS, VICE PRESIDENT AND CHIEF TECHNOLOGY OFFICER

AMAZON.COM

We ended up with around 600 to 800 services.

After enjoying several years of increased velocity, we observed productivity declining again. Engineers were spending more and more time on infrastructure: managing databases, data centers, network resources, and load balancing.

This led to the build-out of the technical components that would become Amazon Web Services (AWS).

Amazon is a unique company. It looks like a retailer on the outside, but we truly are a technology company.

We hire the best engineers and don’t stand in their way: If they decide a solution is best, they are free to move forward with it. To move fast, we removed decision-making from a top-down perspective—engineers are responsible for their teams, their roadmaps, and their own architecture and engineering; that includes oversight for reuse of APIs. Teams are encouraged to do some lightweight discovery to see whether anybody else has solved parts of the problems in front of them, but we allow some duplication to happen in exchange for the ability to move fast.

We realized this technology could help Internet-scale companies be successful, and it completely transformed the technology industry.

The shift to APIs enabled agility, while giving us much better control over scaling, performance, and reliability—as well as the cost profile—for each component. What we learned became, and remains, essential to scaling the business as we continue to innovate and grow.

Risk implications

Today’s API imperative is part of a broader move by the enterprise to open architectures—exposing data, services, and transactions in order to build new products and offerings and also to enable more efficient, newer business models.

Cyber risk should be at the heart of an organization’s technology integration and API strategy.

An API built with security in mind from the start can be a more solid cornerstone of every application it enables; done poorly, it can multiply application risks. In other words, build it in, don’t bolt it on:

- Verify that your API developers, both internal and third-party, employ strong identity authentication, authorization, and security-event logging and monitoring practices.

- Build in second-level factors of authentication and in-memory, in-transit, and at-rest data encryption methods when high-risk data sets or environments are involved.

- Evaluate and rigorously test the security of third-party APIs you leverage.

- Clearly understand the exposure and technical security requirements of public versus private APIs, and apply enhanced security due diligence and monitoring considerations on your public API set.

- Allocate enough time to conduct API unit and integration security testing exercises to detect and fix potential security vulnerabilities. Missing credential validation, data type checking, or data validation; improper error handling; insufficient memory overflow handling; and privilege escalation are just a few examples of issues that attackers can exploit.
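As a rough illustration of the checks listed above (credential validation, authorization, data type checking, data validation, error handling that does not leak internals, and security-event logging), here is a minimal sketch of a request handler. Everything in it is a hypothetical assumption made for illustration: the `API_KEYS` store, the `transfer:create` scope, the payload fields, and the `handle_transfer` function are not part of any API described in the report.

```python
import hmac
import logging

logger = logging.getLogger("api.security")

# Hypothetical key store (assumption): API key -> scopes granted to that caller.
API_KEYS = {"key-partner-1": {"transfer:create"}}


def authenticate(api_key):
    """Return the caller's scopes, or None if the key is unknown."""
    if not isinstance(api_key, str):
        return None
    for known_key, scopes in API_KEYS.items():
        # compare_digest avoids leaking key contents through timing differences.
        if hmac.compare_digest(api_key.encode(), known_key.encode()):
            return scopes
    return None


def validate_payload(payload):
    """Check types and ranges; return a list of problems (empty means valid)."""
    if not isinstance(payload, dict):
        return ["payload must be an object"]
    problems = []
    account = payload.get("account_id")
    amount = payload.get("amount")
    if not isinstance(account, str) or not account.isalnum() or len(account) > 32:
        problems.append("account_id must be alphanumeric, at most 32 characters")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount must be a number")
    elif not (0 < amount <= 10_000):
        problems.append("amount must be greater than 0 and at most 10000")
    return problems


def handle_transfer(api_key, payload):
    """Return (status_code, body) for a hypothetical transfer request."""
    scopes = authenticate(api_key)
    if scopes is None:
        logger.warning("security event: unknown credentials")
        return 401, {"error": "unauthorized"}
    if "transfer:create" not in scopes:
        logger.warning("security event: missing scope")
        return 403, {"error": "forbidden"}
    problems = validate_payload(payload)
    if problems:
        logger.warning("security event: invalid payload (%d problems)", len(problems))
        return 400, {"error": "invalid request", "details": problems}
    try:
        # Placeholder for the real business logic.
        return 201, {"account_id": payload["account_id"], "amount": payload["amount"]}
    except Exception:
        # Log details server-side; return only a generic message to the caller.
        logger.exception("unexpected failure in transfer handler")
        return 500, {"error": "internal error"}
```

Each rejection path is logged as a security event and returns a generic error, so the same function can be exercised directly in unit and integration security tests with malformed keys and payloads.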

Where do you start?

Viewed from the starting block, an API transformation effort may seem daunting, especially for CIOs whose IT environments include legacy systems and extensive technical debt. While the following steps do not constitute a detailed strategy, they can help lay the groundwork for the journey ahead:

- Embrace an open API arbitrage model. Don’t waste your time (and everyone else’s) trying to plot every aspect of your API imperative journey. Instead, let demand drive project scope, and let project teams and developers determine the value of APIs being created based on what they are actively consuming. That doesn’t mean accepting a full-blown laissez-faire approach, especially as the culture of the API imperative takes root. Teams should have to justify decisions not to reuse. Moreover, you might have to make an example of teams that ignore reuse guidelines. That said, make every effort to keep the spirit of autonomy alive within teams, and let the best APIs win.

- Base API information architecture design on enterprise domains. The basic API information architecture you develop will provide a blueprint for executing an API strategy, designing and deploying APIs to deliver the greatest value, and developing governance and enforcement protocols. But where to begin? To avoid the common trap of over-engineering API architecture, consider basing your design on existing enterprise domains—for example, sales and marketing, finance, or HR—and then mapping APIs to the services that each domain can potentially expose. Approaching architecture design this way can help avoid redundancies, and provide greater visibility into APIs’ effectiveness in driving value and supporting domain-specific strategies.

- Build it and they won’t come. Driving API consumption is arguably more important than creating APIs, a point often lost on organizations as they embrace the API imperative trend. To build an organizational culture that emphasizes API consumption, start by explaining the strategic importance of consumption to line-of-business leaders and their reports, and asking for their support. Likewise, create mechanisms for gauging API consumption and for rewarding teams that embrace reuse principles. Finally, share success stories that describe how teams were able to orchestrate outcomes from existing services, or rapidly create new services by building from existing APIs.

- Determine where microservices can drive value. If you are beginning your API transformation journey, you probably have multiple services that could be managed or delivered more effectively if they were broken down into microservices. Likewise, if you already have API architecture in place, you may be able to gain efficiencies and scalability by atomizing certain platforms into microservices. To determine whether this approach is right for your company, ask yourself a few questions: Do you have a large, complex code base that is currently not reusable? Are large teams required to develop or support an application? Are regular production releases required to maintain or enhance application functionality? If you answered yes to any or all of the above, it may be time to begin transitioning to microservices.

- Define key performance indicators (KPIs) for all exposed services. Deploying an API makes a service reusable. But is that service being reused enough to justify the maintenance required to continue exposing it? By developing KPIs for each service, you can determine how effectively API platforms are supporting the goals set forth in your API strategy. If the answer is “not very effective,” then KPIs may also be able to help you identify changes to make that can improve API impact.

- Don’t forget external partners. APIs should be built for consumers, partners, and internal lines of business. For external partners, including the developer community, it is important to develop and provide necessary support in terms of documentation, code samples, testing, and certification tools. Without it, collaboration and the innovation it drives rarely take off.
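The KPI step above can be made concrete with a small sketch. Assuming a hypothetical call log of (service, consuming team) pairs, it computes two illustrative reuse metrics per exposed service, total calls and number of distinct consuming teams, and flags services that fall below a threshold. The log format, the choice of metrics, and the threshold are all assumptions for illustration, not measures prescribed by the report.

```python
from collections import defaultdict


def reuse_kpis(call_log):
    """Map each service to its call count and number of distinct consumers."""
    calls = defaultdict(int)
    consumers = defaultdict(set)
    for service, team in call_log:
        calls[service] += 1
        consumers[service].add(team)
    return {
        service: {"calls": calls[service], "distinct_consumers": len(consumers[service])}
        for service in calls
    }


def underused(kpis, min_consumers=2):
    """List services consumed by fewer than min_consumers distinct teams."""
    return sorted(
        service
        for service, kpi in kpis.items()
        if kpi["distinct_consumers"] < min_consumers
    )


# Hypothetical log entries: (exposed service, consuming team).
log = [
    ("pricing", "web"),
    ("pricing", "mobile"),
    ("pricing", "web"),
    ("hr-lookup", "hr-portal"),
]
kpis = reuse_kpis(log)
print(kpis["pricing"])   # {'calls': 3, 'distinct_consumers': 2}
print(underused(kpis))   # ['hr-lookup']
```

A service that appears in the underused list is a candidate for the question the text raises: is it reused enough to justify the maintenance needed to keep exposing it?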

Bottom line

As pioneering organizations leading the API imperative trend have discovered, companies can make more money by sharing technology assets than by controlling them. Embracing this trend fully will require rethinking long-held approaches to development, integration, and governance. But clinging to the old ways is no longer an option. The transition from independent systems to API platforms is already well under way. Don’t be the last to learn the virtues of sharing.

Authors

Larry Calabro is a principal with Deloitte Consulting LLP and is based in Boston.

Chris Purpura is a managing director with Deloitte Consulting LLP, based in San Francisco.

Vishveshwara Vasa is a managing director with Deloitte Digital and is based in Phoenix.

Arun Perinkolam is a principal with Deloitte & Touche LLP’s Cyber Risk Services practice, based in San Jose, Calif.

Donation trial

If you enjoyed this article, you can donate to the author by bank transfer, leaving a message in the following format:

Donation - Sender's name - Người suy nghĩ - Message.

Trần Việt Anh

Account number: 0451000364912, Vietcombank, Thành Công branch, Hà Nội

Thanks for reading, and remember to donate!
